- Date

- 06 APRIL 2023

- Author

- VITTORIA MARTINOTTI

- Image by

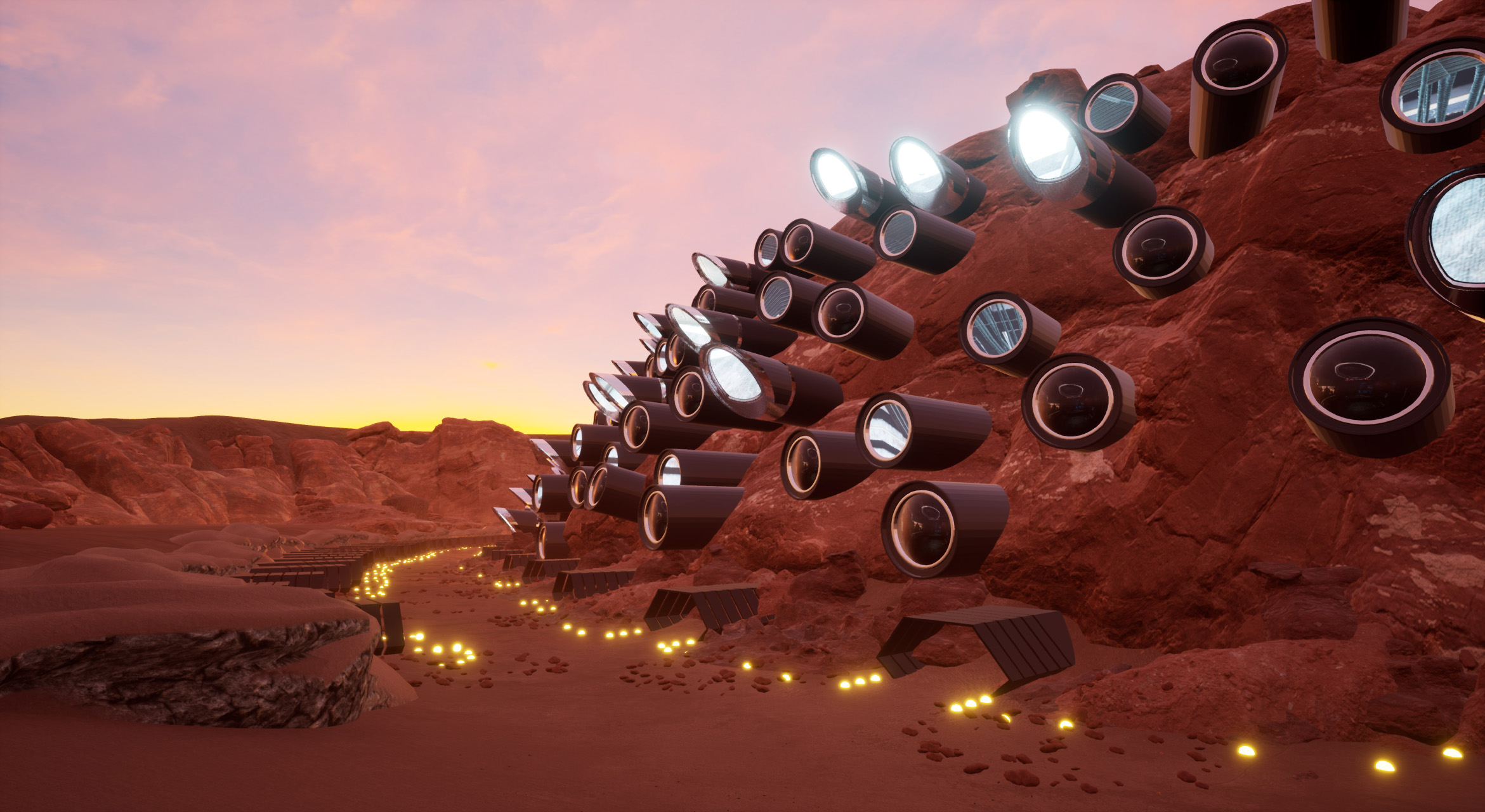

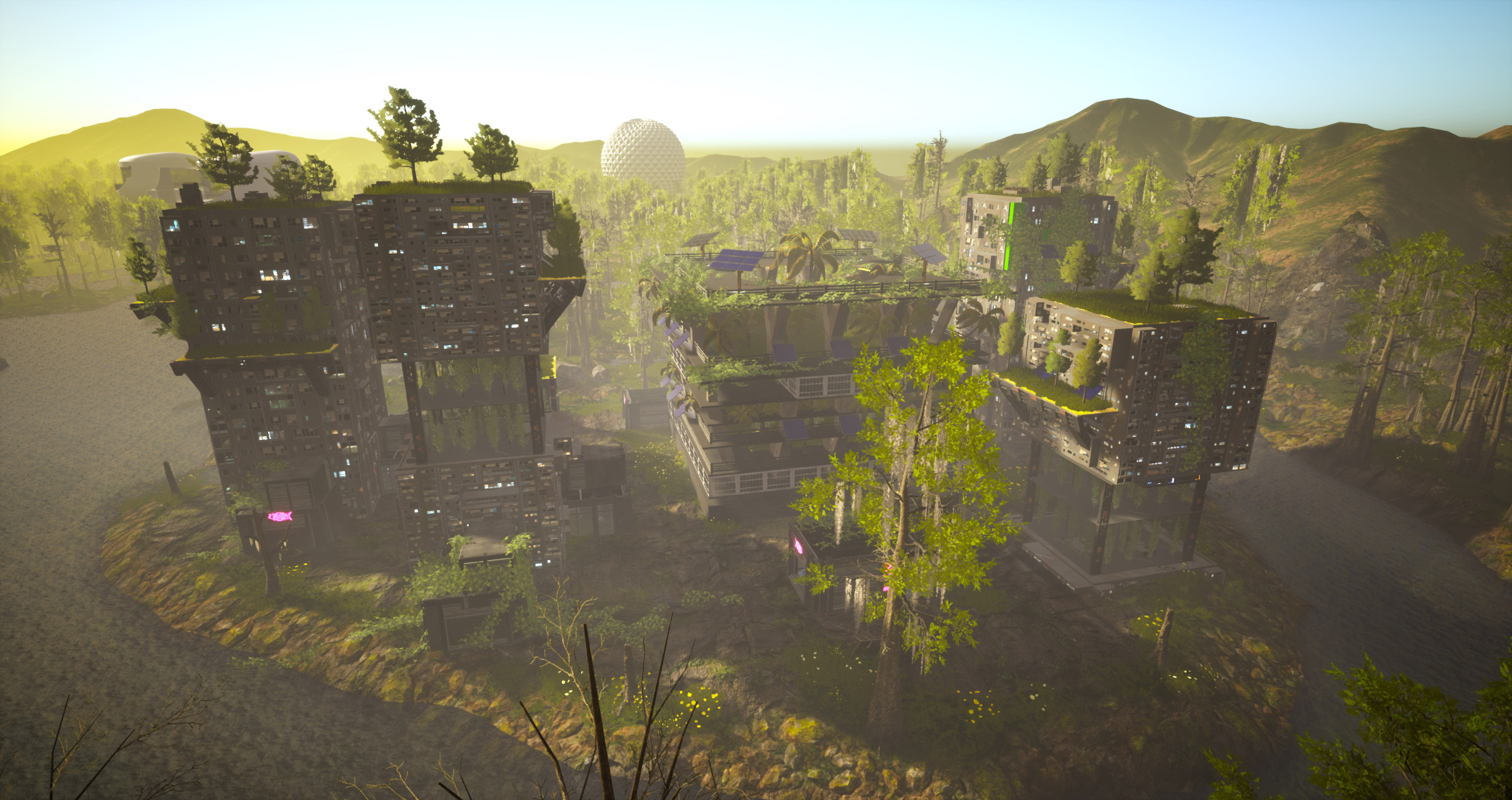

- @ALICEBUCKNELL

- Categories

- Aesthetics

On technology and humanity: Alice Bucknell and her alternative worlds

Alice Bucknell is a North American artist and writer based in London and Los Angeles. Working primarily through game engines, she explores interconnections of architecture, ecology, magic, and nonhuman and machine intelligence. She is the organizer of New Mystics, a platform exploring magic, mysticism, ritual, and technology. We had the pleasure of engaging in a fascinating conversation about her work and her plans for the future, and of discussing her compelling research and approach to art making.

Before delving into your art practice, I would like to discuss your overall work. Besides being an artist, you are also a writer, an educator, and a developer, so how would you describe your practice? Do you feel that your multidisciplinary approach helps you shape your art production?

I tend to describe my practice as a kind of multidisciplinary world-making. Moving across different formats, from writing to teaching to curating, making video art and video games, and initiating collaborative projects and events like New Mystics (online) and New Worlds (Somerset House, 2022), my projects are always feeding into each other. There’s no real cut-off or dividing factor where one project ends and another begins; working in this entangled way means that certain thematic connections, like the relationship between ecology and technology, or technology and magic, are always being reframed and reoriented in different ways - it’s quite a kaleidoscopic practice.

Thinking across disciplines is a huge part of my approach, too. I tend to work at a systems scale, where attention is focused on the many and often unlikely ways things interrelate, so a work about swampland in Florida can scale up and out to a question of speculative finance, or the future agency of an artificial ecosystem; likewise, a work about the prospective human habitation of Mars might draw on interplanetary legal systems, the Scottish Highlands, or the problem with the language strategies we use to talk about outer space and our relationship to the cosmos. Working at this slightly confounding scale, where you begin to see the world not as a singular universal thing but as an ever-changing ecosystem composed of many smaller worlds, is, to me, crucial as a tool for speculating on alternative versions of the future that do not center the human.

As your previous answer suggests, your work is very dense in theory. You often talk about drawing inspiration from sci-fi ecological theories, which to me is such a fascinating concept. Could you please expand on that?

Sure! I have a background in social anthropology and research architecture, and I am pretty much reading something all the time: a lot of sci-fi, as you say, but also speculative fiction, climate fiction, and weird fiction (a subgenre of spec fic that focuses on nonhuman intelligence and multispecies protagonists). Speculative fiction and weird fiction are hugely inspiring resources for me in thinking ecologically; many of my favorite stories, like Jeff VanderMeer’s Southern Reach trilogy, Jenny Hval’s Paradise Rot, Huw Lemmey’s Unknown Language (written in collaboration with the medieval saint and mystic, Hildegard of Bingen), or Ursula K. Le Guin’s The Word for World is Forest, are excellent guides for anti-anthropocentric thinking. These texts have nonhuman characters or ecological protagonists that basically make a mess of human understandings of the world; it’s a tradition that stems from Gothic fiction, wherein encountering such implosions of “reality” as we know it makes the human characters lose their minds. Think of H.P. Lovecraft’s The Call of Cthulhu.

By “unlearning” through these nonhuman “ghosts” and “monsters” of a damaged planet, we can begin to understand how limited our own comprehension of the Earth, its ecosystem, and the inner workings of that ecosystem (its intelligence) really is. I think that by flipping the script and inverting this human tradition of rationalizing and making sense of the world to manipulate it, we can work towards a more equitable and sustainable future for the planet for more than one species. Pretty much all my work stems from this idea.

Following this thread, could you share what happens before you produce a specific work? What impact do theory and research have when shaping a new project? And can you share some other studies that are dear to your heart and that you implement in your works?

I wish I could say I have a clear framework for producing each new project, but it varies pretty wildly from one world to the next. Some, like E-Z Kryptobuild, a project I made in 2020 that draws a comparison between the apocalyptic speculative finance models of architecture and cryptocurrency in a reality TV-style mock game show format, come out of years of research simmering away on a backburner in my mind. For a few years, outside of my editorial day job, I was an architecture journalist, and across a number of press trips to new building launches, I became fascinated by the future-leaning language strategies that celebrity architects would use to describe their work. This is particularly true of those working in the tech circuits of Silicon Valley campus design or libertarian-style floating artificial islands of the “seasteading” community: they’d herald a pretty garbage and carbon-heavy building shaped like a cosmic ring or lilypad as the future salvation for humanity, citing its underwater algae solar farm, green roof, or blockchain-powered self-governance system. The final result of that project came together pretty quickly, over just a couple of months, as I already had so much material to work with—also, these delusional architects are a pretty easy target.

Other projects, like The Martian Word for World is Mother and my newest WIP project, The Alluvials, which is set in a near-future version of LA and speculates on the future of the water crisis from a more-than-human perspective, take much longer to draw together. This is partly because there is so much research to be done from many different angles, and partly because these projects attempt to get away from more human-centric analyses of their subject matter through collaborations with AI and other kinds of nonhuman intelligence. When I’m making a world, I’ll be taking a bunch of different approaches simultaneously: experimenting with AI tools, creating a speculative environment in a game engine, reading fiction and nonfiction, inputting some of these readings into language and text-based AI models, writing the script… All of these activities feed into each other pretty organically, and oftentimes I’ll reconfigure things and run the AI models a bunch of times over until I get something that sticks or feels good. And sometimes I’ll uncover a piece of research that completely changes my approach, so the narrative will split off and re-emerge in a different context. It’s a really nonlinear process! On that note, thinkers who tackle topics around world-making or worlding, like Donna Haraway, Karen Barad, Federico Campagna, Ian Cheng, and Patricia Reed, are really important to me in thinking about worlding as a process of eternal ongoingness, and as something that you can’t really have total agency over. When the work starts taking you to unexpected places and you lose some of that control, to me that’s a sign that things are going to get good.

You once said that artists are practitioners of osmosis, that they reflect and absorb cultural trends that emerge in contemporary culture to respond to them in real time. How do you feel that this statement applies to your practice?

Well, my work tends to take up big issues: the “wicked problems” facing us in the present, such as the climate crisis, ecological collapse, accelerated capitalism, the tech bro’s desire to dominate other planets, water scarcity, the future implications of AI, multispecies extinction. You can also think of these issues as what philosopher Timothy Morton calls “hyperobjects”: complex, multiscalar conditions that occlude easy pinpointing or ready solutionism. I do think artists have an important role to play in responding to these conditions, not in the sense that they future-cast or propose “solutions” to these multiple overlapping crises, as there’s clearly no easy fix, but rather in opening up an expanded take on these conditions and allowing us to better understand them through multiple perspectives.

I’ll just say one more thing about the real-time response. The speed at which this tech advances, and at which these “wicked problems” evolve and become increasingly urgent, means that my work is responding quite quickly to the conditions of the present. I do think it’s important for artists to engage with these conditions, but if the response is *too* tethered to the present, that does sometimes foreclose the possibility of imagining alternatives. Likewise, if the response is set too far into the future, it can quickly disintegrate into something abstract, like far-flung science fiction versus the more recognizable social and cultural territory of spec fic. For that reason, I tend to situate my work in an unspecified but near-future moment that has distinct elements of the present encased in a bit of future speculation.

In your work “The Martian Word for World is Mother”, among the various themes you tackle, you address questions about the limitations of language, and more precisely the need for a non-human language to better understand AI and machine-generated ways of expression. Can you tell us more specifically how and why you think this “lost in translation” effect with conventional language happens, and why it is problematic?

Sure—I think about language in a pretty anthropological sense, as a kind of worldmaking tool; our understanding of a singular “consensus reality” is basically modeled through the language we use to describe it. So once language starts shifting, our idea of the world—and the world itself—begins to morph, too.

In arguing for a multispecies language, one not rooted in the sole domain of the human, I believe we can become attuned to other forms of knowledge that are ignored by a human-centric language system. For me, the use of language-based AI models like GPT-3 is an exciting step in this direction, a kind of opportunity to “hallucinate” with language and to understand it not as a simple tool of communication but as something weird and, at times, disorienting.

This becomes most apparent when the language model falters: it might not get the correct understanding of a prompt, or the output tailspins in its own linguistic feedback loop. In other words, the AI starts speaking nonsense. “Nonsense” is actually just a different form of sense-making that maybe doesn’t make sense from a human perspective but shouldn’t be seen as less valuable for that reason. Although I worry that opportunities to engage with the linguistic glitch or “nonsense” will be increasingly rare as these models are fine-tuned towards human-centric productive processes (GPT-4 is being released this week!), I think hanging out in a linguistic latent space where words shed some of their conventional associations and take on new meanings is a valuable reference point to understand language as an infinitely mutable code. What new worlds could we envision if we co-create a new language structure that doesn’t center on the human?

Thanks for sharing this really interesting take! I have noticed that, while talking about your work, you often say that you “collaborate” with game engines and AI. I think the use of this precise word is remarkable, so I was wondering whether collaborating with others or otherness is important in your overall practice. Could you expand on your collaboration process with AI and technology? Is it in any way shaping your production and creative process?

Recognizing the degree to which working with AI models is collaborative, and being particular in the language I use to frame that form of working together, is really important to me. It’s also something of a resistance against seeing these technologies as utilitarian tools that lack agency, which is how the mainstream conversations around AI go: either seeing it as some destructive superintelligence that will eradicate human abilities or as a dumb technological hat trick that will never create at the same capacity as its human users. This language and framing, of course, come out of an anthropocentric approach to AI, who it is for, and what it can do.

Furthermore, a lot of my scriptwriting process in recent work like the Mars project and The Alluvials is training the model on seemingly disparate texts (a press release from SpaceX versus an eco-feminist essay from Donna Haraway) and seeing what emerges in the latent space of worlds and words colliding. This creates a logical short circuit in the human brain, even if you try your best to resist compartmentalizing reality, weighing probabilities, and preventing frictions, all skill sets that human language has practically perfected. But when these texts are run through an AI model, new paths and ideas are created in the latent space of obvious contradictions. So a lot of the scenarios in my projects that seem to hinge ludicrously across dystopia and utopia, another set of unproductive binaries we’ve created, are actually machine-generated. Honoring the author of these ideas as a nonhuman collaborator is significant to the stakes of my work and the process by which it is created.

In your work, you deal with themes such as post-colonial and post-anthropocentric imaginaries while testing the limits between ecology, AI, architecture and sometimes also magic. Do you feel it is important for you to constantly investigate and come up with alternative scenarios?

Yes, personally I find it a very exciting and necessary kind of practice in the present. I should also reiterate here that my work is absolutely not trying to future-cast a singular vision or offer immediate solutions to the present, which is an impossible and dangerous brand of solutionism that we know well enough already from the technosolutionists in Silicon Valley. Rather, I’m using speculation to expand the atlas of shared understanding for what a future, or indeed multiple futures, could look like.

On that note, I am a big fan of the writer Ursula K. Le Guin, and I also love her approach to speculative fiction: as a descriptive, not predictive, practice. In all of her worlds, which are ostensibly set far in the future, perhaps in other galaxies, she basically uses a model of the future as a metaphor or cipher for better understanding the wicked problems of the present: societal collapse, ecological ruin, economic extremes, fascist politics, unproductive binaries for self-identity, etc. To me, it’s very important to think about speculative fiction and worldmaking as two approaches for better orienting ourselves to the present, rather than conjuring escapist utopian futures that will never arrive.

I would like to finish this interview by asking you about the future. As mentioned before, your work is characterized by meticulous research that is especially relevant at this very moment, so where do you see your research taking you in the next few years?

Great question! And clearly, I’m a terrible future forecaster, because I don’t have a singular answer for you. I would love to weigh in on the future creation of the AI models I use, like large language models (LLMs), as well as be a part of the production and testing of smaller-scale, artist-led AI tools. I’ve recently had the pleasure of working with The New Real, an AI artist-led research organization based out of the University of Edinburgh, beta testing their version of word2vec, a language model, for a new mini-project. Being able to test out the model on a project about language, the climate crisis, and hurricane forecasting has been a really interesting experience, in that I’ve been able to chat with the model’s developers while having more agency in the stakes of the AI-human collaboration than with an LLM like GPT-3/3.5. Thinking on the other end of the spectrum, I would also love to continue my collaborations and conversations with scientists researching more-than-human approaches to language and intelligence, such as some of the interesting research at the moment around the intelligence of slime molds, fungi, plants, and animals that possess a networked or rhizomatic intelligence, or the language of animals using sonar, like bats, whales, and dolphins. I’d also like to continue creating worlds in my own art practice, of course, and teaching across disciplines, around the themes of language, nonhuman intelligence, and posthuman world-making.