- Date

- 21 JANUARY 2023

- Author

- DAVIDE ANDREATTA

- Image by

- Categories

- Aesthetics

Barcodes, commodities and animist ontologies: AI perspectives with Surea.i

Through a prolific and polymorphous cooperation with AI itself, surea.i (@surea.i) explores how perspectives and digital embodiment interact. Her creations explore surrealism, symbols and the execution of ideas that would not be possible without the use of AI-driven image synthesis models. She is interviewed here about her journey in using AI to make art, as well as her thoughts on "AI vision" and the future of information.

I have seen as many AI-produced images as a human eye can possibly endure. Closing my eyes doesn’t help: my eyelids are being harassed even when lowered. However, there is one project by @surea.i that is making me reconsider the very way my pupils should welcome the world.

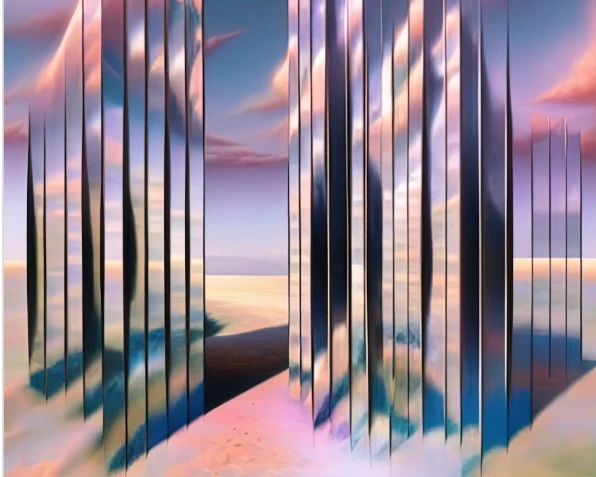

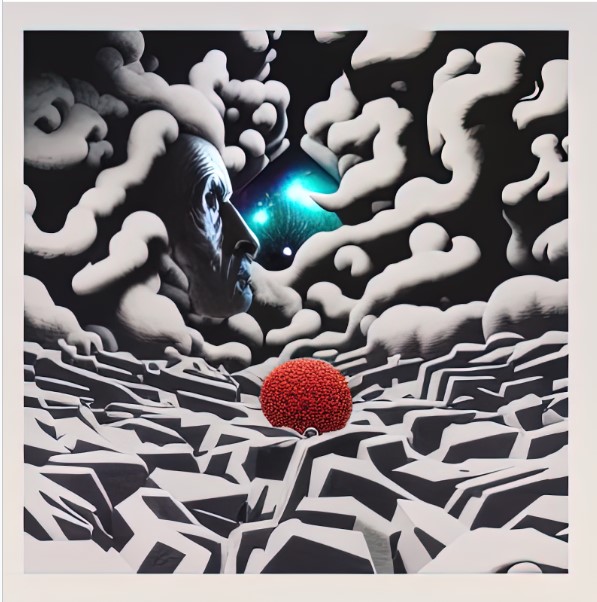

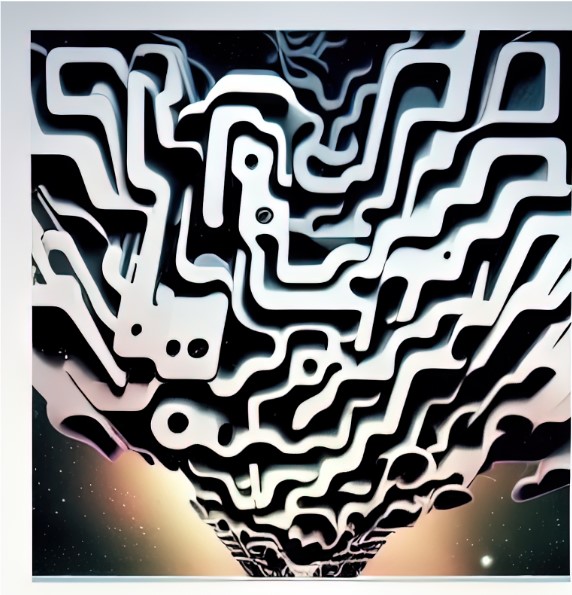

Using Stable Diffusion within Stability AI’s DreamStudio Web UI, @surea.i fed the AI some barcodes and QR codes as initial images to act as a starting point: half hallucinations, half machinic dreams, the results invite us to reconsider what it means to look at what is commonly considered the most anonymous, commodified and sterile form of identification.

Starting with the way they are grouped, problems «will take altogether different forms depending on the ontological, cosmological, and sociological contexts in which they arise. [...] Lévi-Strauss provides an example when he compares the methods of investigation adopted by the Spanish and the Amerindians when faced with the question of their respective humanity. While the churchmen wondered whether the savages of America possessed souls, the Indians of Puerto Rico immersed the whites that they captured in water for weeks on end to see whether they would rot. The former posed the problem of the nature of man in terms of moral attributes; the latter did so in terms of physical ones».

And it is very evident from these images that the questions that AI posits to barcodes are very different from ours. We don’t usually spend much time looking at these kinds of identification tools and, when we do, they remain an afterthought at best, a trigger for self-pity at worst (Ted Kaczynski, I’m looking at you!). On the other hand, AI’s gaze explores a landscape that shows no flatness: colors, differences in altitude, shadows, dreamy depth are the ingredients of a landscape innervated with reflections.

For AI, barcodes and QR codes aren’t a method of representing data, they aren’t prosthetic limbs used to enhance a cashier’s body. They are a meeting point, a tourist attraction for machinic visitors, living flesh waiting for a contact to be established.

Benjamin was convinced that forces of liberation were inherent within things, that in commodities, with their fetishization, material drives, affects and desire were intertwined; he fantasized about igniting these compressed forces; he hoped for a participation in the symphony of matter. This is exactly what might be at stake with these images: AI making the chord of matter visible for us, granting us a front row seat at this concert that has been playing for centuries (in this performance we are actually more a supporting cast, a community, rather than just an audience).

The ability that AI has to release these inorganic drives might be a byproduct of its constitution. In animist ontologies «a soul is thus the concrete and quasi-universal hypostasis of subjectivities that, however, are definitely singular since they proceed from forms and behavior patterns that determine the situation and mode of being in the world that are peculiar solely to the members of the species collective that has been endowed with those particular attributes. This interiority is shared by almost all beings, but the mode of its subjectivization depends on the organic envelopes of the beings that possess it. That this is a strange paradox is shown by Viveiros de Castro when he writes of Amazonia as follows: “Animals see in the same manner as we do different things from those that we see, because their bodies are different from ours.”». The biases are the same, the imagination is shared and yet possessing a different body (technology is always embodied) from ours, AI sees in the same manner as we do different things from those that we see. We both look at a barcode and where we see a set of parallel lines, AI sees a parallel universe.

Hi, @surea.i, I have to admit that I was incredibly impressed by this project of yours, and it might well be the single most interesting thing I have seen since AI diffusion models became widespread. What sparked your interest in barcodes and QR codes?

When I first started playing with diffusion models, my interest was piqued by Perlin noise, a procedural generation algorithm developed to generate textures in computer graphics. Images generated by AI Diffusion and GAN models begin with Perlin noise. To the human eye, Perlin noise looks like the type of static you might see on a TV screen, but to AI, it holds virtually infinite possibilities, depending on what is suggested by its operator.

On Twitter, the AI art community had been doing “SeptembAIr”, something similar to what “traditional” artists do with “Inktober”, where artists are challenged to create something new every day, often with a pre-determined theme. One such daily theme for this event was “galaxy”, for which I opted not to generate anything at all, instead posting a screenshot of Perlin noise (here: https://twitter.com/sureailabs/status/1568600180793958400). The idea was two-fold. First, Perlin noise in particular was sort of galaxy-like, with brighter and darker spots. Second, it represented a starting point for a virtually infinite number of outcomes, contingent on the prompt that the AI is asked to iterate on, similar to the virtual infinity that a galaxy represents to the human eye.

Later on, I was curious about what other types of images could serve as something similar to Perlin noise, at least where human perception is concerned. This is where QR codes and barcodes came to my mind; they are both essentially “static” to the human eye. Both are recognizable as their respective category entities (i.e. a QR code or a barcode), but neither is ever recognized as a unique entity depicting specific information. I was curious as to whether I could coax the Stable Diffusion AI into hallucinating something interesting if given QR codes and barcodes as starting images, especially given that unlike Perlin noise, the contrast in them is so much starker.

While working on this project, I was also thinking about compressed information, and how this is arguably part of the nebulosity of AI-assisted synthesis and controversy of AI overall.

There is a misconception that when an AI model generates an image, it is “sampling” from images it has seen during its training (or more misinformed yet, from a massive “database” it is privy to at all times), when the reality is more complicated, and involves a great deal of compression of information into a format that is incomprehensible to the human mind. Similarly, barcodes and QR codes compress information (though in a much simpler way than an AI model) into a format that can only be reliably read by machines. I found it very interesting to think about a future in which no one can understand the world the same way machines understand it, and yet they become an inextricable part of our lives and means of communicating information.

You told me that you were one of the early alpha testers for Midjourney and StableDiffusion and that you were using AI to make art months before those two landed on the scene. Can you expand on that?

In November 2021, I started playing around with NeuralBlender, a primitive web-based platform for AI image synthesis. At the time, diffusion models were primitive and VQGAN was still having its heyday. I remember being so excited by the prospects of this new tech that I bit my tongue while trying to fall asleep one night. I immediately started generating a ton of work and sharing it on a dedicated AI art account I called “surea.i”, a mixture of “surreal” and “AI” (pronounced “Sir A.I.”). I was initially posting curated pieces 6 times a day on a schedule, before slowing down and switching to one piece per day, where I would do some manual editing to suit my specific style.

In late 2021 and early 2022, there were far fewer folks on social media (particularly Twitter) whose sole purpose was to share and talk about AI art and image synthesis. Even fewer were trying to cultivate a personality to go along with it. For me, surea.i was supposed to be catty, irreverent and a bit arrogant; the things that seem to sow engagement on Twitter.

In early 2022, the online AI art community and I were abuzz with folks using Google Colab to run Disco Diffusion and Jax among others. But my follower count didn’t really start to rise until new user-friendly platforms like Midjourney, DALL·E 2 and later Stable Diffusion hit the scene. While these were still in closed beta, access to their image generation tools was contingent on mysterious forces and invites to their platforms were exclusive. You had to hope that you’d get hand-plucked off the waitlist by someone at Midjourney, or later, at OpenAI for access to DALL·E 2 and later yet, at Stability AI for Stable Diffusion.

Having a head start with the early tools led to my being part of the first wave of beta testers for Midjourney. Once I was in, it was easy to rack up a few thousand followers by posting whatever “experiments” I thought looked cool that day. Midjourney’s closed beta phase lasted several months, during which time I also got my beta invitation to DALL·E 2 and what would eventually become Stable Diffusion.

I’ve been kicking around the AI art community for over a year now, notably contributing to a well-known collaborative project known as the Image Synthesis Style Studies (hosted at parrotzone.art). I’ve had the privilege of not only watching all these new tools develop and change in real time, but also being part of testing them. I guess it paid off to become obsessed with this tech early, at a time when everything still looked like spaghetti. It’s incredible how quickly image synthesis has gone from a fun curiosity for making cursed pictures to a legitimate threat to the livelihoods of “traditional” artists.

In the article I discuss the commodity form, liberation and desire: what kind of relationship do you think there is between AI and NFTs? I don’t know if you’re particularly interested in NFTs, but they seem to constitute the inevitable destination for many AI-produced images…

Ah, yes… NFTs. Despite having collected a few just to see what it was like for myself (which, hilariously, has been used by anti-AI art types to malign me as an “NFT guy”), I haven’t ventured into selling my own art in this format for a few personal reasons I won’t get into. I can say that the AI art community is deeply steeped in the NFT world. It makes sense to me, especially when selling art that is natively digital in the way that AI-generated images are.

There are certainly other parallels between AI and NFTs that can’t be ignored. Both represent new alternatives (and potential threats) to the status quo in a myriad of spheres: art, technology, ownership, intelligence, and value. Both are poorly understood by a wide swath of those aware of them, and arguably by many who use AI or traffic in NFTs as well. Both AI and NFTs represent uncertainty and instability in our view of the future. It’s very much the “wild west” for both these technologies right now, sort of like how the internet was in the 1990s. No one really knows exactly what roles these technologies will play in the coming decades, though there seems to be a general consensus that at least one of them, if not both, is here to stay.

Any new project you’re currently working on and that you can share with us?

After some health complications (and just plain burnout) a few months back, I took a long hiatus from treating AI image synthesis like a job or serious hobby. For a long time I was just playing around and delighting in the imagery, or trying to get a reaction out of my audience. I’ve only just started to get back to what one might call projects.

I’ve been fascinated by Nijijourney lately, which is an anime-oriented model still in closed beta, developed by the folks at Midjourney and Spellbrush (known for Waifu Labs). It’s great at anime, of course, but I keep probing the model to see what “edge case” uses it may have where it outperforms Midjourney.

Back in July I started a thread where I’d try and recreate Twitter users’ profile pictures using only text prompts. It was a popular project and people loved seeing how someone might describe the “face” they put out to the world. I recently decided to replicate this thread using Nijijourney (here: https://twitter.com/sureailabs/status/1594154356714401793) and am similarly having lots of fun recreating anime-style versions of Twitter profile pictures.

I’m also working on revitalizing my Instagram feed (@surea.i), which is what I’d consider my personal portfolio as an “AI artist”. I haven’t posted anything since late September, which is only about 2 months from the time of this writing, but is seemingly an eternity in AI-time. I’m currently searching for my next artistic process “phase”. Starting in April, I had become caught up in a specific artistic process where I would take an initial image (usually generated in Midjourney) and run it through Disco Diffusion (via Google Colab) a few dozen times with different prompts. Then I would blend together the details that I liked most from all these iterations into one composite image. This became unfeasible for me after: a) Google Colab changed their monetization system for GPU usage, meaning users no longer had unlimited compute, and b) I had to quit my jobs due to the aforementioned health issues, meaning I could no longer afford to run iteration after iteration in Disco Diffusion anyway.

My next phase could go a few different ways and I expect to be posting new work to my IG portfolio soon. I’ve recently become obsessed with using old “drafts”, or AI-generated images I never posted or used for anything, and using these as a starting point for newer models to interpret in different contexts. Doing the same with old pieces is fun too, though a little bit more difficult because of the sentimentality involved in working with a cherished completed piece.

I loved to use Procreate for my previous composite work and I’m returning to it to “collage” together elements from drafts. One project I’m working on right now is something I’m calling “Bismuth Dreams”, where I take advantage of the newer models’ abilities to generate clean, vector-like objects with a consistent look to them. The look is this sort of metallic neon, copper verdigris-type sheen, and I plan to create a sprawling collage out of all these images. They’re stunning on their own, and I wish to put them all together in a tableau one could get lost in. The name “Bismuth Dreams” is interesting to me because of the dramatic difference between how bismuth appears in nature and how it appears when it is lab-made. I think there are parallels to be drawn between bismuth and the dichotomy of “organic” art in comparison to this new medium of “AI” art.

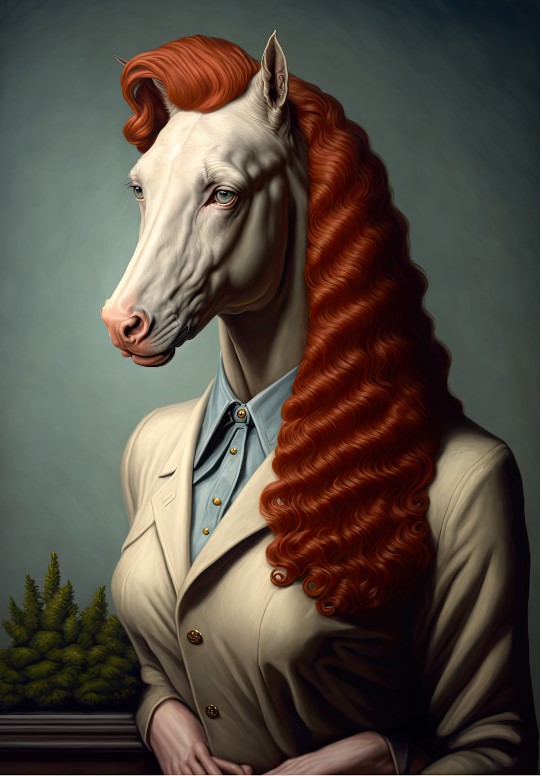

I’ve also become a little obsessed with a certain red-haired character I pulled out of the latent space recently. She doesn’t have a name yet, but I’ve been experimenting with inserting her into various new contexts to see how AI interprets her presence. Currently, I’m enjoying taking my old portfolio pieces and including her portrait with them as image prompts. Since I started using AI to make art, I’ve wanted to create a “surea.i universe” with recurring elements. For a long time it was difficult to regenerate the same character across several pieces, but this is now no longer the case.

And of course, perhaps I’ll go back to my earlier “roots” where I would post AI-generated images with minimal editing, and perhaps uncouple myself (again) from the guilt of “not working hard enough” when I use AI to create my art. That seems to be one emotion critics can’t yet get a handle on for themselves.

Surea.i’s work can be seen on Twitter @sureailabs, where she posts off-portfolio work, or on Instagram @surea.i, where she keeps it “art-only”.

Interview by Davide Andreatta

Image Courtesy of Surea.i