- Date

- 21 OCTOBER 2022

- Author

- GLORIA MARIA CAPPELLETTI

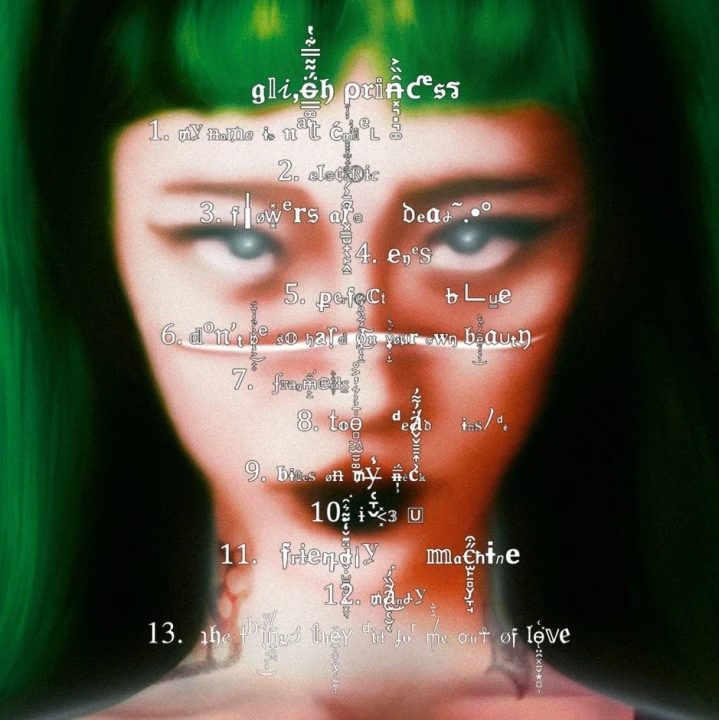

- Image by

- YEULE BY NEIL KRUG

- Categories

- Music

Music In The Age Of The Algorithm

Artificial Intelligence (AI) is rapidly becoming a default tool for creating music. It contributes to music industry infrastructure, co-creation, and collaboration, and offers technology to anyone who wants to create music, whether or not they are musicians. The applications of AI in music production raise new possibilities and concerns about production processes and about where artists fit into this new age.

For better and worse, artists and researchers are already experimenting with AI in an attempt to propel music to new heights. Many now see AI as an accomplice in making music, one that augments the work and democratizes the playing field: it allows artists to experiment in ways that in the past would have required a number of technical and musical collaborators and very large budgets. Today, AI has multiple uses in music, enabling people to rapidly create, without musical training, music that is very similar to music made by humans.

A new approach is also emerging in music schools, which are teaching and using AI and big data technologies in arts education in order to promote the growth of music education. Wireless networks and AI technologies have been progressively permeating education as a new means of innovation, providing new ways to think about reforming music teaching in colleges and universities. Technology has been changing music, and the arts more generally, for generations.

AI technologies and applications have grown considerably more widespread and functional, driven by increasing data volumes, more advanced algorithms, and improvements in computing power and storage, all commodities needed by music makers. The use of AI in music composition, performance, theory, and digital audio processing is being researched right now, and a number of music software packages are being created for these purposes.

For instance, in music composition courses, AI systems could efficiently supplement students' composition materials and create more comfortable conditions for students to compose music. The so-called interactive composition method, whereby the computer produces music in response to a live musician's performance, is also powered by AI. AI has also been used for timbre transfer, or tonal translation, which allows vocalists to use their voices as synthesisers by singing into a piece of software that converts the tones into the sounds of another instrument.

ORB Composer is targeted towards artists interested in exploring the possibilities of creating music using AI and in discovering new styles of music. Both expert musicians and music lovers can make use of this iOS-based application for creating new melodies within minutes. You can also create a video soundtrack using AI Music Composers: upload a piece of music you have already recorded and make variations on it.

Even with the growing number of artists using it, music composed by AI is still far from perfect. The AI algorithms used by Muzeek analyse the video you are creating music for, and Muzeek produces tracks that fit the video's beats perfectly. In late September, Harmonai released Dance Diffusion, an algorithm and set of tools that generates musical clips after training on hundreds of hours of existing songs.

The arrival of Dance Diffusion comes several years after OpenAI, the San Francisco-based laboratory that created the image-generation system DALL-E 2, detailed its big music-generation experiment, Jukebox. The company Space150 has been making waves with TravisBott, an artificial intelligence model trained on MIDI data based on Travis Scott's original music and lyrics without the artist's permission, which raises huge ethical questions. YouTube star Taryn Southern, who has more than 700 million views, released the first-ever album composed and produced completely by artificial intelligence, using AIVA, Amper Music, IBM's Watson Beat, and Google's Magenta.

AI in music has the potential to expand creativity and open doors to new paths. Now that this future has arrived, what’s next? Various industries are taking up the opportunities AI offers, and you can see AI being actively used in education, healthcare, cosmetics, and, of course, music. AI in music can be seen as a tool to democratize music, making it easier for new artists to break into the industry, and this is always a good thing.

Any announcement about new AI-powered music technologies comes with the inevitable hand-wringing over the future of creativity and over whether automated tools are a threat to human artistic expression. At a more general level, I would say we need to think hard about what the appropriate role for AI in music is, and what claims can reasonably be made for AI music systems, if we are serious about music as a human-expressive art form. It is also essential that we study and understand emerging technologies like artificial intelligence with an open mind, learn sound methods for using them, and acknowledge both the value and the limitations of the technology as an auxiliary tool, so that we can clarify the meaning of education and the role of music teachers in this age of artificial intelligence and computer-human interaction.

Let us look at AI-powered tools that have been successful at helping musicians break through writer's block, spur new ideas, aid music making, and achieve greater creative output. We are celebrating successes and disruptions, from performances of artificially composed Beethoven-esque string quartets, to Flow Machines and impressive, completely computer-generated pop songs such as "Daddy's Car," to scripts written by AI such as "Sunspring". We are going to see (and hear) computer models of expressive playing, including, if circumstances allow, live demos of collaborative human-machine piano performances, and even hear from one expressive-playing system that is claimed to have passed the musical Turing Test.

At the same time, artificial intelligence could learn the characteristics of various styles of songs from its training material, thereby helping humans with individualized musical composition and vastly enriching genres and styles of music. Loudly's AI music studio is powered by generative adversarial networks trained on 8 million musical tracks and 150,000 audio sounds created by professional producers, and the algorithm is then capable of creating sophisticated compositions in less than 5 seconds. To some, the idea that computers are creating music by algorithm instead of it being woodshedded by artists seems like heresy. How do you feel about it?

AI-Generated text edited by Gloria Maria Cappelletti, editor in chief, RED-EYE

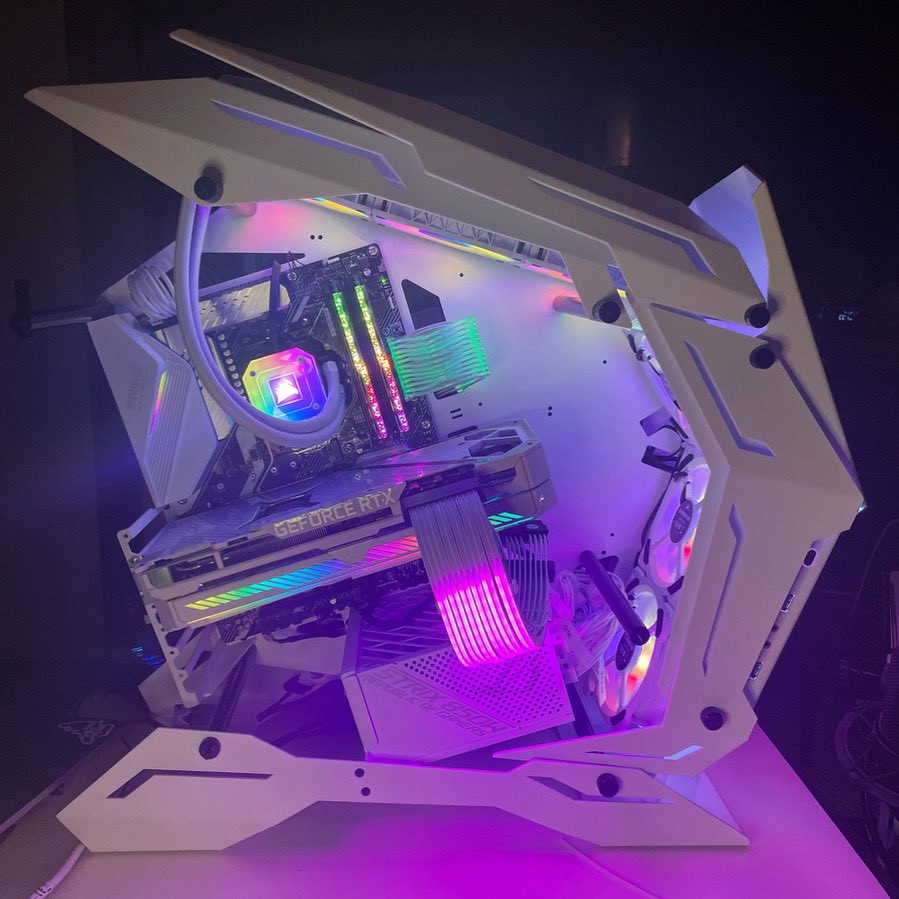

All images from Yeule Glitch Princess, Twitter Account

FOLLOW RED-EYE https://linktr.ee/red.eye.world